What "AI governance" actually means for a financial services architecture team

Model registries, data boundaries, approval gates, and why the best governance is the kind nobody notices.

Your CISO sends a memo: “We need AI governance.” Your CTO nods. Someone creates a Confluence page titled “AI Governance Framework.” A committee forms. Meetings happen. Six months later, you have a 40-page policy document that nobody reads and a shadow AI problem that’s gotten worse, not better.

I’ve watched this play out at 3 different financial services organizations. The pattern is consistent. The fix is too.

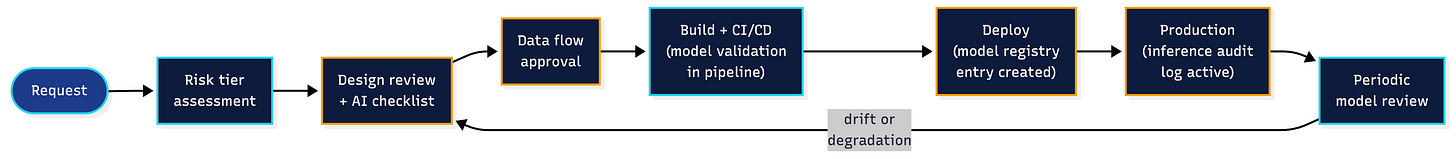

AI governance for an architecture team comes down to 4 controls: model inventory, approval gates, data boundary enforcement, and audit trail. Get those 4 right and you’re ahead of 90% of your industry. Get them wrong (or skip them entirely) and the regulator will find the gap before you do.

But here’s the part that takes most teams years to figure out: the best version of governance is invisible. It’s embedded so deep into your existing engineering processes that developers go through it without knowing they’re being governed. That’s the vision. It’s also extremely hard to get there.

The 4 controls

Model inventory

What’s deployed, where, who owns it, what version is running. Sounds basic. Most organizations can’t answer this question across teams. Someone in fraud is running a fine-tuned model they containerized 4 months ago. Someone in document processing is calling an external API endpoint the security team hasn’t assessed. The inventory is the foundation and it’s usually incomplete.

If you run a CMDB or service catalog, add AI components to it. Same schema, same ownership fields, same lifecycle tracking. A model is an integration. Treat it like one.

Approval gates

Every AI component goes through the same architecture review process as any other system change. Same intake form. Same risk tiering. You add 3 or 4 AI-specific questions to the template: What data does the model see? Is it an external API or self-hosted? Can you reproduce a given output from a given input? What’s the rollback plan?

The mistake is building a separate approval track for AI. I’ll come back to this.

Data boundary enforcement

Which data can flow to which model endpoint. PII to an external LLM API is a different risk profile than anonymized transaction metadata to a self-hosted classifier. Your data classification scheme already exists (if you’re in FinServ, your regulators made sure of that).

Extend it. Map every AI data flow against it. Flag anything that crosses a boundary without explicit approval.

Audit trail

Every inference logged, traceable, reproducible. “The model said X” isn’t an audit trail. “Model version 2.3.1, deployed on node Y, received input Z at timestamp T, produced output W with confidence score C” is an audit trail.

In a regulated environment, you need to reconstruct any decision the model participated in. OCC and FFIEC guidelines on model risk management (SR 11-7) have been clear about this since 2011. AI didn’t change the requirement. It changed the volume.

When it works

Governance works when teams don’t experience it as governance.

This is a platform engineering problem. The best platform teams build paved roads: CI/CD pipelines, deployment templates, service scaffolds. Developers pick the template, fill in their specifics, and ship. The guardrails are baked into the path of least resistance. Nobody thinks about them. They just use the thing that’s easy.

AI governance should work the same way. Your architecture review template already has sections for security, data classification, and operational readiness. Add the AI-specific questions there. Same with your CI/CD pipeline: linting, tests, and security scans are already running. Model validation checks belong in that same pipeline.

And the service catalog that tracks components and owners? Add model entries.

When a developer picks up the standard deployment template and it already includes model versioning, inference logging, and data flow declarations, that’s governance. They didn’t attend a governance meeting. They didn’t read a 40-page policy. They filled in 4 extra fields on a form they were already filling in.

Getting to this state is hard. Really hard. It requires tight coordination between your architecture team, your platform team, and your security team. Most organizations won’t get all the way there, and that’s fine.

But this is the vision you should build toward. Measure your progress against it. Every step closer is a step away from the alternative: shadow AI or governance theater.

When it fails

Two failure modes. I’ve seen both up close.

Failure mode 1: no governance at all (shadow AI)

At one organization, a team deployed an AI-assisted document classification system without going through architecture review. The business case was strong, the team was capable, and they didn’t want to wait 6 weeks for the review board. So they shipped it.

It worked fine for about 2 months. The model was classifying incoming correspondence and routing it to the right department. Accuracy looked good. Nobody complained.

Then an internal audit flagged it. The auditors asked 3 questions the team couldn’t answer: Which version of the model produced the classification for document X on date Y? What PII fields does the model have access to? Where is the data flowing (on-prem or external)?

The model was calling an external API. Customer names, account numbers, and correspondence content were leaving the network boundary on every request. Nobody had done a data flow assessment.

Nobody had checked whether the API provider’s data handling met the organization’s regulatory requirements either. The provider’s terms of service allowed them to use submitted data for model training. In financial services, that’s a finding that gets escalated fast.

The fix took 3 weeks. Model registry entry. Data flow diagram. Inference logging bolted on after the fact. Vendor risk assessment. A rollback plan that should have existed on day 1. The model itself barely changed. The controls around it changed completely.

The team didn’t do anything malicious. They shipped a good solution and skipped the boring parts. The boring parts turned out to be the parts the regulator cares about.

Failure mode 2: the parallel SDLC

A financial services organization decided to take AI seriously. Good instinct. They stood up a dedicated AI Center of Excellence with its own leadership, its own budget, and a mandate to “do AI governance right.”

The CoE started building. An AI-specific intake process. An AI-specific review board. AI-specific deployment checklists. An AI-specific risk assessment template.

They were thorough. Every artifact was polished. Every process was documented.

About 8 months in, the problems started surfacing. Application teams deploying an AI component had to go through 2 full review cycles: the standard architecture review and the AI governance review.

The AI governance review had its own queue, its own reviewers, its own SLAs. A deployment that used to take 2 weeks was now taking 5.

Teams started getting frustrated. Some started routing around the AI review (”it’s a rules engine with a small ML component, does it really need the full AI process?”). Others just stopped proposing AI projects because the overhead wasn’t worth it. The CoE had built, without intending to, a parallel SDLC. Every process the engineering organization already had, the CoE had rebuilt from scratch with “AI” in the title.

To their credit, the team recognized what happened and fixed it. They disbanded the separate review board, folded AI-specific checks into the existing architecture review, and embedded their AI risk expertise into the platform team’s standard templates. Time to deployment came back down. Adoption went up.

But this pattern repeats across large enterprises. AI gets treated as something so special, so different, that it needs its own everything. The intent is good. The effect is the opposite: more friction, more shadow AI.

These are growing pains. Organizations go through them and learn. But if you’re reading this before you’ve set up your governance model, you can skip the pain and directly implement the findings from this particular lesson.

The architecture team’s checklist

Before you approve any system that includes an AI component, get answers to these:

- Model registered in service catalog with version, owner, and deployment location?

- Data flow mapped against your classification scheme?

- External API endpoints risk-assessed by security?

- Inference logging in place (input, output, model version, timestamp)?

- Rollback plan documented?

- AI-specific questions answered in the standard architecture review (not a separate review)?

- Success criteria defined and testable before deployment?

- Periodic model review scheduled (drift detection, performance monitoring)?

Any blank here means the design isn’t done. Push it back.

The pattern underneath

AI governance is an architecture problem. The policy team can write principles all day. The compliance team can build checklists. But the architecture team is the one that decides where the controls actually live in the system. And the best place for them to live is inside the processes your teams already follow.

Four controls: inventory, gates, boundaries, audit. Embed them in your existing SDLC. Make them the path of least resistance. Build toward invisible governance and measure how close you get.

Most teams start with either too little (shadow AI) or too much (parallel SDLC). The target is in the middle, and it shifts as your organization matures. That’s fine. Start somewhere. The regulator would rather see an imperfect framework that’s actually running than a perfect policy document that nobody follows.

---

Layer Zero is a Principal Enterprise Architect at a Financial Services company. Pulse & Pattern publishes every Tuesday and Friday at [pulseandpattern.substack.com](https://pulseandpattern.substack.com).